Indice

Algorithm Engineering A.A. 2019-2020

Teacher: Paolo Ferragina

Teacher: Paolo Ferragina

CFU: 9 (first semester).

Course ID: 531AA.

Language: English.

Degree: Master degree in Computer Science and Master degree in CS&Networking.

Question time: Monday: 15-17, or by appointment

News: about this course will be distributed via a Telegram channel.

Official lectures schedule: The schedule and content of the lectures is available below and with the official registro.

Goals

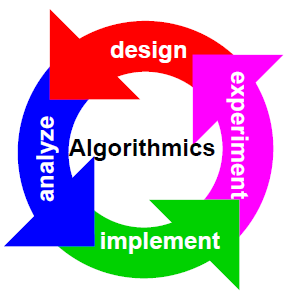

In this course we will study, design and analyze advanced algorithms and data structures for the efficient solution of combinatorial problems involving all basic data types, such as integers, strings, (geometric) points, trees and graphs. The design and analysis will involve several models of computation— such as RAM, 2-level memory, cache-oblivious, streaming— in order to take into account the architectural features and the memory hierarchy of modern PCs and the availability of Big Data upon which those algorithms could work on. We will add to such theoretical analysis several other engineering considerations spurring from the implementation of the proposed algorithms and from experiments published in the literature.

Every lecture will follow a problem-driven approach that starts from a real software-design problem, abstracts it in a combinatorial way (suitable for an algorithmic investigation), and then introduces algorithms aimed at minimizing the use of some computational resources like time, space, communication, I/O, energy, etc. Some of these solutions will be discussed also at an experimental level, in order to introduce proper engineering and tuning tools for algorithmic development.

Week Schedule of the Lectures

| Week Schedule | ||

|---|---|---|

| Day | Time Slot | Room |

| Monday | 9:00 - 11:00 | L1 |

| Tuesday | 11:00 - 13:00 | L1 |

| Wednesday | 11:00 - 13:00 | L1 |

Exam

| Dates | Room | Text | Notes |

|---|---|---|---|

| 19/11/19, 11:00-13:00 | (First Midterm) | text, solution, results. | Students that got a rank >= 16 can participate to the second midterm exam. |

| 17/12/19, 11:00-13:00 | room on exam's site (Second MidTerm) | text, solution, results. | Writeups can be examined Thursday 9th January at 9:00 in my office. Score “30 e lode” is assigned only to the students who got score 32 (as ceiling of the average + 2), and no vote smaller than 29. The score is lost if the student participates to one of the next exams (just sitting is enough !). The score can be registered in any of the following exam dates (even in the summer), but PLEASE do not write your name in the ESAMI platform if you want to register your exam score, just show yourself in one of those dates. |

| 10/01/20, 09:00-13:00 | room on exam's site | text, solution. | The score is lost if the student participates to one of the next exams (just sitting is enough !). The score can be registered in any of the following exam dates (even in the summer), but PLEASE do not write your name in the ESAMI platform if you want to register your exam score, just show yourself in one of those dates. |

| 07/02/20, 15:00-17:00 | room on exam's site | text, solution. | The score is lost if the student participates to one of the next exams (just sitting is enough !). The score can be registered in any of the following exam dates (even in the summer), but PLEASE do not write your name in the ESAMI platform if you want to register your exam score, just show yourself in one of those dates. |

| 12/06/20, 9:00- | virtual room on Teams | pre-test to be admitted to the oral. | The score is lost if the student participates to one of the next exams (just sitting is enough !). The score can be registered in any of the following exam dates (even in the summer), but PLEASE do not write your name in the ESAMI platform if you want to register your exam score, just show yourself in one of those dates. |

| 10/07/20, 9:00- | virtual room on Teams | pre-test to be admitted to the oral. | The score is lost if the student participates to one of the next exams (just sitting is enough !). The score can be registered in any of the following exam dates (even in the summer), but PLEASE do not write your name in the ESAMI platform if you want to register your exam score, just show yourself in one of those dates. |

Background

I strongly suggest to refresh your knowledge about basic Algorithms and Data Structures by looking at the well-known book Introduction to Algorithms, Cormen-Leiserson-Rivest-Stein (third edition). Specifically, I suggest you to look at the chapters 2, 3, 4, 6, 7, 8, 10, 11 (no perfect hash), 12 (no randomly built), 15 (no optimal BST), 18, 22 (no strongly connected components). Also, you could look at the Video Lectures by Erik Demaine and Charles Leiserson, specifically Lectures 1-7, 9-10 and 15-17.

Books, Notes, etc.

We'll use the old-fashioned blackboard and few slides. Most of the content of the course will be covered by some notes I wrote in these years; for some topics parts of papers/books will be used.

Lectures

| Date | Lecture | Biblio | |

|---|---|---|---|

| 16/09/2019 | Introduction to the course. Models of computation: RAM, 2-level memory. An example of algorithm analysis: the sum of n numbers, and binary search. The role of the Virtual Memory system. | Chap. 1 of the notes. | |

| 17/09/2019 | Finding the maximum-sum subsequence (study from my notes!). Exercise: evaluating the I/O-cost of binary search. Random sampling: disk model, known length (algorithms and proofs). | Chapter 2 (no sect 2.5-); Chap. 3 of the notes. | |

| 18/09/2019 | Random sampling on the streaming model, known and unknown length. Reservoir sampling. Algorithm and proofs. | ||

| 23/09/2019 | List Ranking: difficulties on disk, pointer-jumping technique, I/O-efficient simulation. | Chap. 4 of the notes. | |

| 24/09/2019 | Divide and Conquer for List Ranking. Randomized coin tossing to determine the independent set. Deterministic coin tossing to determine the independent set. | Chap. 4 of the notes. | |

| 25/09/2019 | Algorithm for Permuting. Sorting atomic items: sorting vs permuting, comments on the time and I/O bounds, binary merge-sort and its bounds. Multi-way mergesort. Snow Plow, with complexity proof and an example. | Chap. 5 of the notes | |

| Students are warmly invited to refresh their know-how about: Divide-and-conquer technique for algorithm design and Master Theorem for solving recurrent relations; and Binary Search Trees | Lecture 2, 9 and 10 of Demaine-Leiserson's course at MIT | ||

| 30/09/2019 | Lower bounds for sorting. The case of D>1 disks: non-optimality of multi-way MergeSort, the disk-striping technique. Quicksort: recap on best-case, worst-case. | Chap. 5 of the notes | |

| 01/10/2019 | Quicksort: Average-case with analysis. Selection of k-th ranked item in linear average time (with proof). 3-way partition for better in-memory quicksort. RandSelect. Dual Pivot QuickSort. | Chap. 5 of the notes | |

| 02/10/2019 | Bounded Quicksort; Multiway Quicksort. Selection of k-1 “good pivot” via Oversampling. Proof of the average time complexity. | Chap. 5 of the notes | |

| 07/10/2019 | Fast set intersection, various solutions: scan, sorted merge, binary search, mutual partition, binary search with exponential jumps. | Chap. 6 of the notes. | |

| 08/10/2019 | Fast set intersection: two-level scan, random shuffling. String sorting: comments on the difficulty of the problem on disk, lower bound. | Chap. 7 of the notes. | |

| 09/10/2019 | LSD-radix sort with proof of time complexity and correctness. MSD-radix sort and the trie data structure. Multi-key Quicksort. Ternary search tree. | Chap. 7 of the notes. | |

| Students are warmly invited to refresh their know-how about: hash functions and their properties; hashing with chaining. | Lectures 7 of Demaine-Leiserson's course at MIT | ||

| 14/10/2019 | Interpolation search. Hashing and dictionary problem: direct addressing, simple hash functions, hashing with chaining. | Sect 9.2 and Chap. 8 of the notes. All theorems with proof, except Theo 8.5 without proof (only the statement). | |

| 15/10/2019 | Uniform hashing and its computing/storage cost, universal hashing (definition and properties). Two examples of Universal Hash functions: one with correctness proof, the other without. | Chap. 8 of the notes. | |

| 16/10/2019 | Perfect hash table (with proof). Exercise. | Chap. 8 of the notes. | |

| 21/10/2019 | Minimal ordered perfect hashing: definition, properties, construction, space and time complexity. Exercise. | Chap. 8 of the notes. | |

| 22/10/2019 | Cuckoo hashing (with proof). Bloom Filter: properties, construction, query and insertion operations, error estimation (with proofs). | No 8.7.1-8.7.3 | |

| 23/10/2019 | Exercises | ||

| 28/10/2019 | Exercises | ||

| 29/10/2019 | Seminar on Learned Data Structures | Slides by Giorgio Vinciguerra | |

| 04/11/2019 | Randomized data structures: Treaps (with proofs). | Notes by others. Study also Theorems and Lemmas. | |

| 05/11/2019 | Randomized data structures: Skip lists (with proofs and comments on I/Os) | See Demaine's lecture num. 12 on skip lists. | |

| 06/11/2019 | Exercises | ||

| 11/11/2019 | Prefix search: definition of the problem, solution based on arrays, Front-coding, two-level indexing. Locality Preserving front coding and its use with arrays. | Chap. 9 of the notes: 9.1, 9.3. | |

| 12/11/2019 | Recap: BFS and DFS visits, Minimum Spanning Tree problem: Kruskal and Prim algorithms and analysis. Dijkstra algorithm for shortest path tree | CLR cap.23 MST SPT | |

| 13/11/2019 | Algorithms for external and semi-external computation of MST, Sybein algorithm. | Sect 11.5 of the Mehlhorn-Sander's book | |

| 18/11/2019 | Cancellata per allerta meteo | ||

| 19/11/2019 | First MidTerm exam | ||

| 20/11/2019 | Compacted tries. Analysis of space, I/Os and time of the prefix search for all data structures seen in class. More on two-level indexing of strings: Solution based on Patricia trie, with analysis of space, I/Os and time of the prefix search. Locality Preserving front coding and its use with Patricia trie. | Chap. 9 of the notes: 9.4 and 9.5. | |

| 21/11/2019 | Substring search: definition, properties, reduction to prefix search. The Suffix Array. Binary searching the Suffix Array: p log n. Suffix Array construction via qsort and its asymptotic analysis. LCP array construction in linear time. Suffix Trees: properties, structure, pattern search, space occupancy. Construction of Suffix Trees from Suffix Arrays and LCP arrays, and vice versa. Text mining use of suffix arrays. | Chap. 10 of the notes: 10.1, 10.2.1 and 10.2.2, 10.2.3 (no page 10-4 and 10-5 and thus no Lemma 10.2, no “The skew algorithm”, no “The Scan-based algorithm”),10.3, 10.3.1, 10.3.2, 10.4.3 | |

| 25/11/2019 | Exercises on MST's algorithms in internal and external memory | Sybein's exercises | |

| 26/11/2019 | Exercises on Substring search, prefix search, and LCP computation | ||

| 27/11/2019 | Correction of the MidTerm exam. Prefix-free codes, notion of entropy, optimal codes. Integer coding: the problem and some considerations. The codes Gamma and Delta, space/time performance and consideration on optimal distributions. | Chap. 11 of the notes | |

| 02/12/2019 | The codes Rice, PForDelta. Coders: (s,c)-codes, variable-byte, Interpolative. Elias-Fano. With examples. | ||

| 03/12/2019 | Huffman, with optimality (proof). Canonical Huffman: construction, properties, decompression algorithm. | Chap. 12 of the notes (no sect 12.1.2). | |

| 04/12/2019 | Arithmetic coding: properties, algorithm and proofs. Dictionary-based compressors: properties and algorithmic structure. LZ77, LZSS and LZ8. | Chap. 12 sect 12.2. No PPM and Range coding. No LZW. Chap 13, no from par 13.3 and following ones. | |

| 09/12/2019 | Canceled | ||

| 10/12/2019 | Canceled | ||

| 11/12/2019 | Exercises | ||

| 16/12/2019 | Exercises | ||

| 17/12/2019 | Second MidTerm exam | ||

| 18/12/2019 | Canceled |